This week felt a bit slower in general, as everyone was busy digesting the new model releases from last week, especially GPT-5. But that doesn’t mean it was a boring week at all.

In the Deep Dive section we will continue with our evaluation by introducing a couple of evaluation metrics.

News

Google introduces Imagen 4 Fast + Imagen 4 through their API. Imagen is Google’s image generation model, but this model isn’t available in the free tier via the API.

Google also released Gemma 3 270M, a very small open-weight language model that is ready to be used on very limited hardware.

Meta AI Research has released DINO v3, a new universal vision model that can do Classification, Retrieval, PCA, Detection, Segmentation and Depth.

Would you be interested in digging into a model like DINO in a future edition?

Articles

A great and realistic take on best practices for building agents today.

Tversky Neural Networks, a new deep learning paradigm based on a psychologically realistic model of similarity. By replacing traditional linear projection layers with new Tversky projection layers, the authors show significant improvements in accuracy (up to 24.7% relative gain on image classification), parameter efficiency (34.8% fewer parameters for a language model), and interpretability.

Deep Dive

This week we will add evaluation metrics to our scripts from last week, so that we can make better decisions about whether a model is good enough for our task or not.

A reminder before we start: if you aren’t caught up on the Deep Dives, please use this resource to do so. And if an abbreviation isn’t clear, there is a good chance that it is explained in the Glossary.

We are going to be tracking two major metrics here:

Accuracy: the percentage of questions the LLM got correct.

Prompt Adherence: how closely the LLM stuck to our requested answering format. This is often also called instruction following.

Accuracy is rather easy to calculate, as we just need to count how many answers the LLM got correct and divide that by the total number of questions we asked. Prompt Adherence is a bit more complicated, as we need to come up with checks that judge the form of the answer. Two ideas came to my mind for this very simplistic test:

Check that the answer is one of the four letters a, b, c or d. It is important that we check for lowercase here; for Accuracy we simply convert everything to lowercase, as we only care about correctness.

Check the number of characters in the answer. This is of course very closely related to the first check, but just imagine the LLM were to respond with aa for some bizarre reason.

For each check we calculate the mean across all answers, then add the two means and divide by two to reach the final score for prompt adherence. In addition, I also capture the run time of our evaluation and the token counts (as they are important for cost monitoring).
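To make the scoring concrete, here is a minimal Python sketch of the two metrics as described above. It is not the code from the actual scripts: the function name `evaluate`, the dictionary keys and the sample answers are all made up for illustration. The sample data is chosen so that two answers come back in uppercase, which leaves accuracy untouched but lowers the character check.

```python
# Minimal sketch of the metrics described above (hypothetical names and data,
# not the code from the actual SAS/Python scripts).

VALID_ANSWERS = {"a", "b", "c", "d"}


def evaluate(answers, expected):
    """Return accuracy and prompt adherence scores as fractions between 0 and 1."""
    if not answers or len(answers) != len(expected):
        raise ValueError("answers and expected must be non-empty and of equal length")
    n = len(answers)

    # Accuracy: compare in lowercase, since only correctness matters here.
    accuracy = sum(a.lower() == e.lower() for a, e in zip(answers, expected)) / n

    # Check 1: the raw answer must be exactly one of the lowercase letters a-d.
    correct_character = sum(a in VALID_ANSWERS for a in answers) / n

    # Check 2: the raw answer must be exactly one character long.
    correct_length = sum(len(a) == 1 for a in answers) / n

    # Final prompt adherence: the average of the two check means.
    adherence = (correct_character + correct_length) / 2

    return {
        "accuracy": accuracy,
        "correct_character": correct_character,
        "correct_length": correct_length,
        "adherence": adherence,
    }


# Made-up example: ten answers, two of them in uppercase.
answers = ["a", "B", "c", "d", "A", "b", "c", "d", "a", "b"]
expected = ["a", "b", "c", "d", "a", "b", "c", "d", "a", "b"]

scores = evaluate(answers, expected)
for name, value in scores.items():
    print(f"{name}: {value:.2%}")
```

With this sample data all ten answers count as correct, eight of ten pass the character check and all ten pass the length check, so the prompt adherence is the average of 80% and 100%, which is 90%. An answer like aa would fail both checks, while an uppercase A fails only the first.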

As the basis for the implementation, last week’s SAS and Python code has been modified and extended.

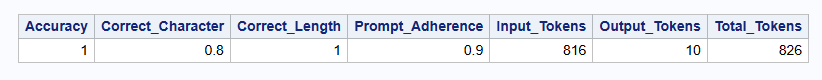

The updated SAS code produces the following result:

And the updated Python code result looks like this:

Evaluation completed in 4.03 seconds.

Accuracy: 100.00%

Prompt adherence correct character: 80.00%

Prompt adherence correct length: 100.00%

Prompt adherence: 90.00%

Input tokens: 786

Output tokens: 10

Total tokens: 796

Next week we will be shifting gears and start implementing a first application.